
3/29/2024

CPU GPU temp monitor while gaming

This is especially the case for thermal pads used to cool memory chips since the default ones tend to tear apart very easily when the GPU is disassembled for the first time. Incorrect thermal paste/thermal pad configuration – if the cooling contacts are not applied properly, or are too old. For example, the Gigabyte Windforce RTX 2060 dual-fan version has “terrible” thermals, often reaching near 80☌ (176☏) compared to other regular models that can stabilize at 68-75 ☌ (154-167 ☏). Lower-tier cooler and fan design – heat can also somewhat accumulate if the cooler or fans are designed to only provide the bare minimum performance required. When overclocking with increased power limits, however, this can be a problem, specifically for the lower-tier versions of a certain GPU model (Asus ROG Strix version versus Asus Phoenix version, for example). On stock settings, this isn’t usually a problem since the GPU is designed by default to withstand such temperatures.

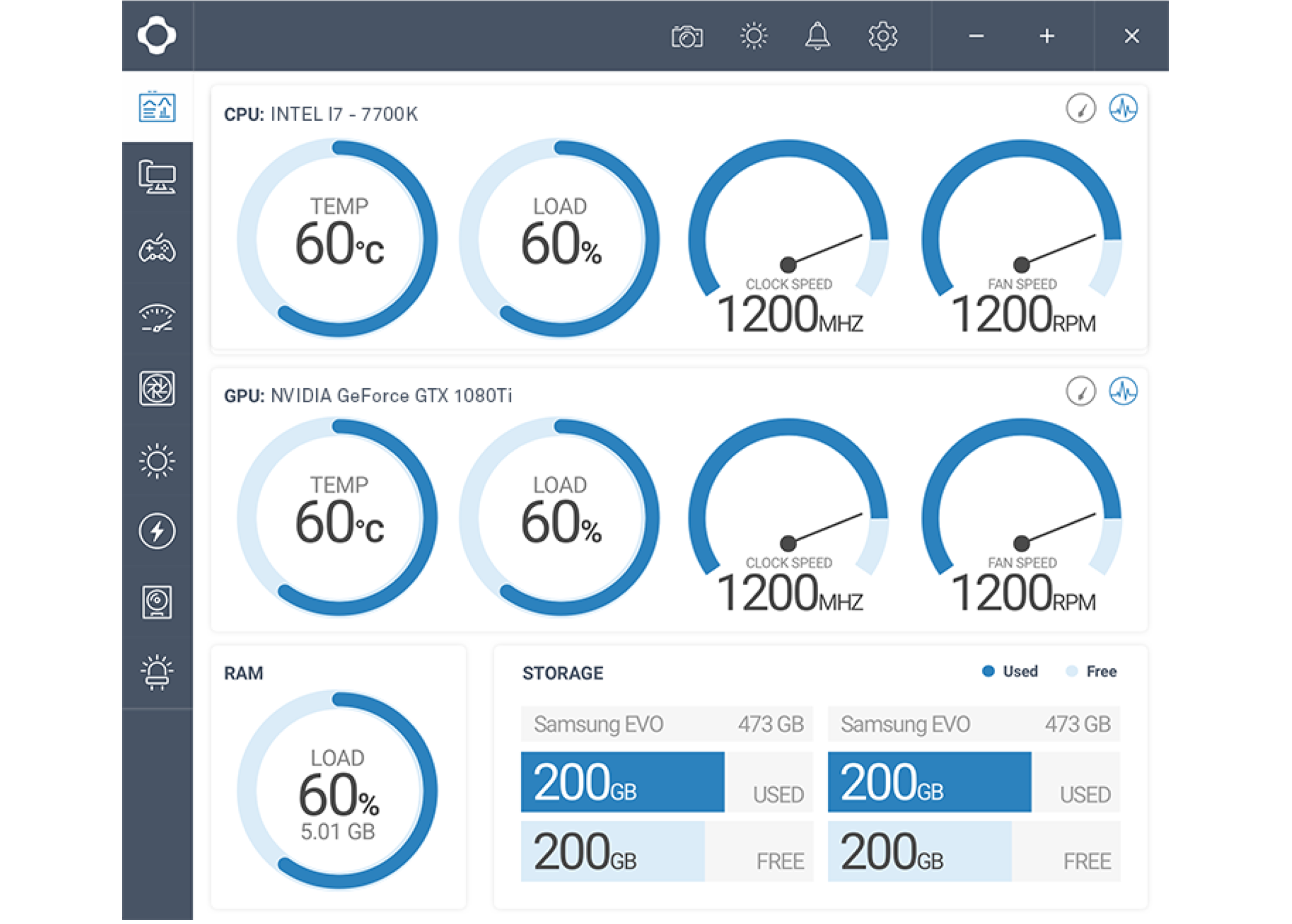

What causes high GPU temperatures?ĭrawn power (too) high – temperatures can abnormally increase if the energy used by the GPU exceeds the heat dissipation capability of the cooler. Also, keep in mind there are different technical and environmental factors that could affect results such as your ambient room temperature. Therefore, if you have a larger form factor system that is adequately configured you should see lower normal gaming and idle GPU temps. * Note: The PC we used to test normal gaming and idle GPU temperatures is a SFF (small form factor) Ncase ITX build containing a Ryzen 7 3700X CPU cooled by a Corsair iCUE H100i RGB Pro XT 240mm Water Cooling Kit and an RTX 2060 Super GPU. Our normal GPU temp while gaming tests resulted in an average GPU temp of 77.2☌, a minimum average GPU temp of 53.3☌, and a maximum average GPU temp of 85.8☌. Normal GPU temp while gaming test results Whereas high game resolution settings will result in higher GPU temps. Lower to medium resolution settings should result in lower GPU temps while gaming. The resolutions at which you play your games can also affect the GPU temps. On average, expect around 70+☌ (158+☏) for the whole graphics card, plus around 10 to 15 degrees Celsius more (+18° to 27☏) for hotspot values. Although GPUs are made to run at much higher temperatures, it is ideal to keep temperatures well below 80☌ while gaming to prevent heat degradation and a decrease in the lifespan of your GPU. What is a normal GPU temp while gaming?Ī normal GPU temperature range while gaming should ideally hover around 65° to 85☌ (149° to 185☏). This is to also prevent potential stability issues and to keep the hardware operating perfectly for as long as it possibly could. That being said, even before Tj max is reached, it is typically recommended to keep GPU temperatures from reaching too uncomfortably close to it. 
At temperatures beyond the Tj max, the GPU starts to significantly throttle itself down in order to prevent high temperatures from causing permanent damage. GPU temperature is important because it establishes the thermal performance limit of the hardware, which is typically referred to as Tj max or the maximum junction temperature of the GPU. The standard unit when measuring GPU temperature would be in degrees Celsius (☌). In most modern graphics cards, there is an option to view the hottest reading on any one sensor, which is called the “hotspot”. It is measured using sensors spread throughout strategic points on the hardware, so generally, it is a mean total of all temperature values, rather than just one single reading. GPU temperature is the mean average calculation of heat energy along the entire circuit board’s surface. We have conducted real real-world tests monitoring both gaming and idle GPU temperatures and have presented the results below. In this article, we will discuss what normal GPU temperatures are when gaming and idle. Thus, normal GPU temperature ranges largely depend on what it is doing at the moment, and how it is (physically) designed to dissipate heat. The higher the workload, the higher the power draw, and the more heat that accumulates. This, however, also generates heat on its circuit board and its chips. Graphics cards, much like CPUs, draw electric energy in order to drive their computational processes.
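As a rough illustration of the figures quoted in this article, the Python sketch below converts Celsius readings to Fahrenheit, checks a reading against the 65°C to 85°C gaming range, and summarizes a list of logged temperatures. The function names, the constant names, and the sample readings are hypothetical and chosen only for this example; the thresholds are the article's rules of thumb, not vendor specifications.

```python
from statistics import mean

# Rule-of-thumb gaming range quoted in the article (an assumption for this
# sketch, not a manufacturer limit such as Tj max).
NORMAL_GAMING_RANGE_C = (65.0, 85.0)


def c_to_f(celsius: float) -> float:
    """Convert an absolute temperature from Celsius to Fahrenheit."""
    return celsius * 9 / 5 + 32


def delta_c_to_f(delta_celsius: float) -> float:
    """Convert a temperature difference (e.g. a hotspot offset); no +32."""
    return delta_celsius * 9 / 5


def classify_gaming_temp(celsius: float) -> str:
    """Place a reading relative to the article's typical gaming range."""
    low, high = NORMAL_GAMING_RANGE_C
    if celsius < low:
        return "below typical gaming range"
    if celsius <= high:
        return "within typical gaming range"
    return "above typical gaming range"


def summarize(samples_c: list[float]) -> dict[str, float]:
    """Return average, minimum, and maximum of logged readings in Celsius."""
    if not samples_c:
        raise ValueError("need at least one temperature sample")
    return {
        "avg": round(mean(samples_c), 1),
        "min": min(samples_c),
        "max": max(samples_c),
    }


if __name__ == "__main__":
    # Hypothetical readings logged during a gaming session, in Celsius.
    readings = [62.0, 71.5, 78.0, 80.5]
    stats = summarize(readings)
    print(stats)
    print(classify_gaming_temp(stats["avg"]))
    print(f"{stats['max']} C is {c_to_f(stats['max']):.1f} F")
```

Note that a temperature difference, such as the 10 to 15°C hotspot offset, converts without the +32 term, which is why 10°C more corresponds to 18°F more rather than 50°F.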
